CAVEON SECURITY INSIGHTS BLOG

The World's Only Test Security Blog

Pull up a chair among Caveon's experts in psychometrics, psychology, data science, test security, law, education, and oh-so-many other fields and join in the conversation about all things test security.

The Conference on Test Security (COTS) Recap: October 2021

Posted by Cicek Svensson


Notable Discussions from the Conference on Test Security

Since 2012, the Conference on Test Security (COTS) has gathered testing professionals to discuss the capabilities and enhancements in test security that protect the validity of test results and brand integrity. COTS is the only conference dedicated entirely to test security. As a result, it’s filled with valuable content relevant to a variety of organizations interested in exploring emerging test security advancements and research.

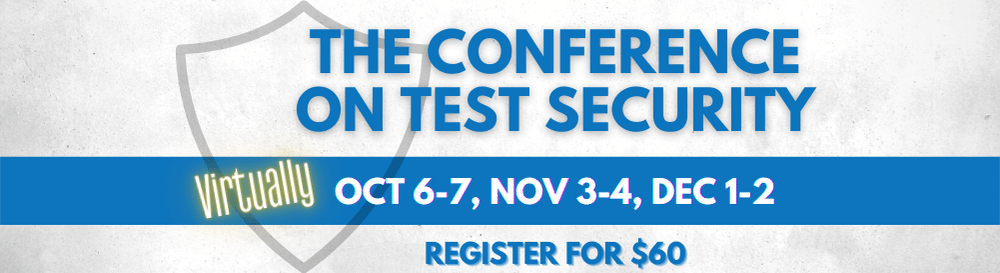

The 2021 conference is being held virtually across three dates: Oct. 6-7, Nov. 3-4, and Dec. 1-2. (You can still register to attend!) This post covers a few notable discussions from the first session of COTS:

- Discussions on the Security Implications for Multi-Modal Delivery

- Sessions on Test Security Processes

- Discussions About Remote-Proctored Exams

- Sessions on Data Forensics and Item Disclosure

- Sessions on Data Forensics for Monitoring and Investigations

- And More

Discussions on the Security Implications for Multi-Modal Delivery

Day 1 of the October session of COTS kicked off with a panel discussion about the security implications of multi-modal delivery. Many assessment programs offer a hybrid model of testing with both computer- and paper-based tests, and with so many different delivery models in use, new complications are bound to arise.

One such complication is that, as remote options become more popular, behaviors such as unscheduled breaks are becoming a problem. During an unscheduled break, proctors have no way of knowing whom the candidate is interacting with or whether that interaction concerns the test. Because these behaviors are uncertain and unverified, it is important to be cautious about labeling any behavior as counterproductive or making accusations. It is imperative to characterize the candidate's actions accurately from the recorded exam session, because behaviors can be misinterpreted (e.g., the candidate may use the phone to call tech support, a family member may enter the room to ask a simple question, or bandwidth issues may occur).

The speakers also touched on new ways candidates can make a testing situation more problematic when taking an exam remotely (e.g., not plugging in their computer's power cord, reading the questions aloud, or having a parent proofread their exam). Communication is another area where companies have had to adapt, particularly with younger demographics. Instead of email, social media platforms and text messages have proven a better way to reach students with information about exam preparation and other important topics.

Sessions on Test Security Processes

Conference presentations on the first day of COTS also addressed test security processes. Some sessions focused on different ways of assessing threats and creating solutions for evaluating results. Others focused on specific methods, such as automated item generation (AIG), linear on-the-fly testing (LOFT), authentication protocols during remote proctoring, data forensics, proctoring audits, and investigative services.

One such session noted the most common security threats for IT certifications:

- Test theft (e.g., examinees stealing test content by memorizing items or recording test sessions)

- Pre-knowledge of exam content

- Proxy testing (having a friend or a family member take the test for you)

- Cheating in other forms (see the full list of theft and cheating threats in this document)

This same session also laid out a few ways programs can prevent these threats from taking place:

- By blocking hotkeys (e.g., preventing examinees from using keyboard shortcuts to copy and paste text)

- Through watermarking technology

- With biometric keystroke security measures

- Through live proctor authentication procedures

- By requiring valid government-issued IDs

Discussions About Remote-Proctored Exams

Another session that day presented a case study of the remotely proctored USMLE exam. The presentation centered on making decisions about testing conditions and conducting risk assessments. For this study, four options were evaluated:

- A remotely proctored USMLE exam

- Two short-term, in-person USMLE scenarios

- “Opportunity loss”: waiting for proctoring centers to reopen

Hypothetical risk assessment categories were identified. These categories were ranked from “low” to “high” based on how easily outside information could be accessed during an exam, the increased potential for proxy test takers, and the reduced level of physical security controls.

This case study pointed out that the security risk category, along with the legal, psychometric, and reputational categories, was instrumental in conveying expert judgment so that decision-makers could compare risks. It also stressed that careful vendor selection and thorough vetting should be part of the risk assessment process. Technological capabilities played a significant role in both the likelihood and the impact of each risk. Solutions would need to be tailored to each exam program, especially because test security concerns differ from program to program.

Sessions on Data Forensics and Item Disclosure

Conference presentations on the second day of COTS started with a session about using data forensics to manage item disclosure. Item disclosure occurs when test items are exposed online, for example on braindump sites. It remains one of the greatest threats to test security and to the validity of test scores. To reduce this threat and control how many of your items are out there, monitoring the web can be an excellent way to find disclosed items. Combined with web monitoring, data forensics analysis provides useful information for detecting disclosed content and crafting an appropriate response, because disclosure affects p-values, response times, pass rates, mean scores, and other test result data.

The speakers presented data forensics methods designed to detect these types of anomalies. The presenters also discussed ways to use the information from data forensics analyses to manage occurrences of item disclosure.
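To make the idea concrete, here is a minimal Python sketch of one simple item-level check, assuming response data has been split into a baseline window (before any suspected disclosure) and a recent window. It flags items whose proportion correct (p-value) rose significantly, using a standard two-proportion z-test, while their median response time also dropped. The `ItemWindow` layout, the function names, and the thresholds (`z_crit=3.0`, `rt_ratio=0.8`) are hypothetical illustrations, not the methods the presenters described.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import median


@dataclass
class ItemWindow:
    """Responses to one item in one time window (hypothetical layout)."""
    correct: list[bool]   # True if the examinee answered the item correctly
    seconds: list[float]  # response time for each examinee, in seconds


def p_value_z(base: ItemWindow, recent: ItemWindow) -> float:
    """Two-proportion z statistic for the change in proportion correct."""
    n1, n2 = len(base.correct), len(recent.correct)
    p1 = sum(base.correct) / n1
    p2 = sum(recent.correct) / n2
    pooled = (sum(base.correct) + sum(recent.correct)) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return 0.0 if se == 0 else (p2 - p1) / se


def flag_disclosed_items(
    baseline: dict[str, ItemWindow],
    recent: dict[str, ItemWindow],
    z_crit: float = 3.0,    # hypothetical significance cutoff
    rt_ratio: float = 0.8,  # hypothetical: recent median time <= 80% of baseline
) -> list[str]:
    """Return IDs of items that became both easier and faster to answer."""
    flagged = []
    for item_id, base in baseline.items():
        new = recent.get(item_id)
        # Skip items with no data in either window.
        if new is None or not base.correct or not new.correct:
            continue
        easier = p_value_z(base, new) >= z_crit
        faster = median(new.seconds) <= rt_ratio * median(base.seconds)
        if easier and faster:
            flagged.append(item_id)
    return flagged
```

Requiring both signals is a design choice meant to reduce false alarms from items that become easier for benign reasons. A real program would choose and tune its statistics and thresholds on its own data.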

In order to effectively manage item disclosure, efforts must be made to devalue the disclosed content and measure the extent to which the disclosed content is being used. Disclosed test content is devalued when the disclosed items are removed from service and replaced by other items. Data forensics analysis can give insight into how quickly items are disclosed. This information can be used to inform a testing program’s schedule for rotating items and republishing exams. Monitoring item performance, score differences, and cluster sizes over time can give valuable insights into how usage of disclosed test content spreads among a testing population.
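As one hypothetical illustration of how forensic results could feed a rotation schedule, the sketch below takes a weekly p-value series for each item, finds the first week in which an item's p-value exceeds its baseline by a chosen margin, and uses the median of those first-flag weeks as a rough time-to-disclosure estimate. The weekly series, the `margin` of 0.15, the function names, and the use of the median are all assumptions made for illustration.

```python
from statistics import median
from typing import Optional


def first_flag_week(
    weekly_p: list[float], baseline_p: float, margin: float = 0.15
) -> Optional[int]:
    """Return the first 0-based week whose p-value exceeds baseline + margin, else None."""
    for week, p in enumerate(weekly_p):
        if p > baseline_p + margin:
            return week
    return None


def estimated_weeks_to_disclosure(
    series: dict[str, list[float]],  # item ID -> weekly p-values since publication
    baselines: dict[str, float],     # item ID -> p-value before any suspected disclosure
    margin: float = 0.15,            # hypothetical margin that counts as a suspicious rise
) -> Optional[float]:
    """Median first-flag week across the items that were flagged at all."""
    weeks = [
        w
        for item, ps in series.items()
        if (w := first_flag_week(ps, baselines[item], margin)) is not None
    ]
    return median(weeks) if weeks else None
```

A program might then plan to rotate items or republish an exam somewhat before this estimate; how much earlier is a policy decision rather than a statistical one.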

Sessions on Data Forensics for Monitoring and Investigations

The afternoon session on the second day of COTS continued the data forensics theme, this time focusing on how data forensics can serve as a multi-tool for monitoring and investigations. The speakers emphasized the importance of choosing statistical methods based on the threat that has been observed or is suspected. From there, programs can determine how often to analyze their data based on the threats they face and the actions they intend to take.

The statistics suggested in this session included similarity, identical-response, perfect-score, and aberrant-score analyses. It is important to note that data forensics measures and assesses score validity, not cheating. Additionally, depending on the outcome of the analysis, different programs have different appetites for acting on data forensics results. Uses of the results range from identifying areas of concern to actually invalidating scores. The exam program's policies and procedures should support the use of data forensics so that this use is clear and defensible. Statistical reporting combined with investigative evidence is necessary for a resulting decision to be legally defensible.
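The similarity and identical-response statistics mentioned in this session can be illustrated with a deliberately simple Python sketch: for every pair of examinees, it counts identical incorrect answers (a common ingredient of similarity indices) and flags pairs at or above a threshold. The answer-string format, the function names, and the `threshold` value are hypothetical. Operational similarity indices also model how likely such matches are by chance, which this sketch does not.

```python
from itertools import combinations


def identical_incorrect(a: str, b: str, key: str) -> int:
    """Count items where both examinees chose the same wrong option."""
    return sum(1 for x, y, k in zip(a, b, key) if x == y and x != k)


def flag_similar_pairs(
    responses: dict[str, str],  # examinee ID -> answer string, e.g. "ABDC..."
    key: str,                   # correct option for each item, same length
    threshold: int = 5,         # hypothetical cutoff for referring a pair for review
) -> list[tuple[str, str, int]]:
    """Return (examinee, examinee, count) for every pair meeting the threshold."""
    flagged = []
    for (id1, r1), (id2, r2) in combinations(sorted(responses.items()), 2):
        count = identical_incorrect(r1, r2, key)
        if count >= threshold:
            flagged.append((id1, id2, count))
    return flagged
```

Consistent with the session's point, a flagged pair is a question about score validity rather than proof of cheating. It would need to be combined with investigative evidence before any action is taken.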

Conclusion

Test security is always evolving; it is a process that keeps changing as aberrant test takers grow more creative over time and with new technology. The Conference on Test Security aims to create an enriching environment where the capabilities and enhancements in test security can be discussed and where ideas that protect the validity of test scores can be shared.

We invite you to register for the two remaining COTS conference sessions. We look forward to learning with you on November 3-4 and December 1-2.

Cicek Svensson

A business psychologist and former Chairman of the Board for the Association of Test Publishers (ATP), Svensson has spent more than 14 years in the testing industry and will bring her wealth of experience to bear in helping Caveon clients address their test security concerns and improve the validity of their test scores.

About Caveon

For more than 18 years, Caveon Test Security has driven the discussion and practice of exam security in the testing industry. Today, as the recognized leader in the field, we have expanded our offerings to encompass innovative solutions and technologies that provide comprehensive protection: Solutions designed to detect, deter, and even prevent test fraud.
